The author is Zhu Pengfei, associate professor of the Machine Learning and Data Mining Laboratory of Tianjin University, and a master's tutor. He received his bachelor's and master's degrees from the School of Energy Science and Engineering of Harbin Institute of Technology in 2009 and 2011, and his Ph.D. in the Department of Electronic Computing from the Hong Kong Polytechnic University in 2015. Currently, he has published more than 20 papers in the world's top conferences and journals of machine learning and computer vision, including AAAI, IJCAI, ICCV, ECCV and IEEE TransacTIons on InformaTIon Forensics and Security.

The InternaTIonal Joint Conference on ArTIficial Intelligence (IJCAI) is a gathering of researchers and practitioners in the field of artificial intelligence and one of the most important academic conferences in the field of artificial intelligence. From 1969 to 2015, the conference was held in every odd year and has been held for 24 sessions. With the continuous development of research and application in the field of artificial intelligence in recent years, the IJCAI conference will become an annual event held annually from 2016. This year is the first time that the conference is held in even years. The 25th IJCAI Conference was held in New York from July 9th to 15th.

The venue of this conference is near the bustling New York Times Square, which is a reflection of the fiery atmosphere of the artificial intelligence field for several years. The conference consisted of 7 guest lectures, 4 award-winning speeches, presentations of 551 peer-reviewed papers, 41 workshops, 37 tutorials, 22 demos, and more. Deep learning has become one of the key words of IJCAI 2016, and there are three paper report sessions with deep learning as the theme. In this issue, we selected two related papers in the field of deep learning to select, and organized doctoral students in related fields, introduced the main ideas of the paper, and commented on the contribution of the paper.

Makeup Like a Superstar Deep Localized Makeup Transfer Network

In the application of face segmentation, beauty is a problem with a wide audience. Give a positive face, if you can give it the best makeup style and render it to this face, it will make it easier for girls to find the right style. The problem solved by the papers of Dr. Liu and others from the Chinese Academy of Sciences is to complete a more perfect face automatic makeup application, not only to give makeup to the face, but also to recommend the most suitable makeup for the user, to achieve higher User satisfaction.

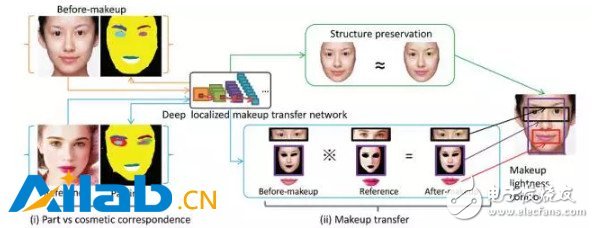

The article adopts the end-to-end method to complete the three steps of style recommendation and facial features to enhance the makeup migration. At the same time, the constraint of smoothness and facial symmetry is also considered in the loss function, and finally the state-of-the-art effect is achieved. The overall framework of the method is as follows:

Core method:

First of all, the style recommendation is to select the picture that is closest to the current face of the face from the database of makeup faces. The specific method is to select the smallest Euclidean distance from the current facial feature as the recommendation result, which is the feature map output by the network.

Then, the facial features of the five senses are extracted by using a full convolutional network to achieve face parsing. However, there is one more eye shadow in the makeup database. For the plain image, there is no problem with the eye shadow. Therefore, according to the eyebrow feature point, Eye shadow area. Since the part of the makeup segmentation is more important than the background, the network output loss selects the weighted cross entropy.

The weight is the weight value that maximizes the F1 score on the verification set. On the other hand, the faces in the database are positive and symmetrical, so the a priori constraint of symmetry is added. The specific method is to output this value and its symmetry after outputting the class probability prediction value of each pixel. Point and then take the mean as the final output:

Finally, the makeup migration. The makeup in this article includes foundation (corresponding to the face), lip gloss (corresponding to the lips), and eye shadow (corresponding to both eyes). The migration of eye shadow is special, because it is not a direct change of the eyes, the article designed a loss for this:

It means L2 Norm (the feature is the FCN first-layer convolution feature conv1-1 used from the facial features extraction part) for the makeup of the person in need and the recommended eye shadow of the face. Similarly, the loss to the face, upper lip and lower lip:

The difference is that it calculates the similarity of the features of the conv1-1, conv2-1, conv3-1, conv4-1, conv5-1 layers. The last given A that minimizes this loss (ie, the final face after makeup) meets the following conditions:

Where Rl and Rr represent the loss of the eye shadow of the left eye and right eye, Rf represents the loss of the foundation of the face, Rup and Rlow represent the loss of the lip gloss of the lower lip, and Rs represents the loss of the structure (the calculation formula is the same as the eye shadow loss, but in Sb, Sr The element values ​​are all 1). The smoothness of the face makeup can be further constrained by the following formula:

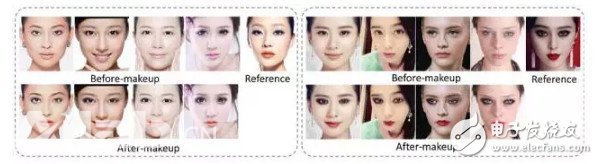

In this paper, the end-to-end deep convolutional neural network is used to learn the correspondence between the characteristic parts of the makeup and the makeup, and the process is simple. After considering the symmetry and smoothness of the face structure, it is achieved. The ideal results, some of the experimental results are as follows:

Feature Learning based Deep Supervised Hashing with Pairwise Labels

In information retrieval, the hash learning algorithm expresses complex data such as image/text/video as a series of compact binary codes (feature vectors consisting of only 0/1 or ±1), thus achieving time and space efficient recent Neighbor search. In the hash learning algorithm, given a training set, the goal is to learn a set of mapping functions, so that the data in the training set is mapped, similar samples are mapped to similar binary encoding (the similarity of binary encoding) Hamming distance metric).

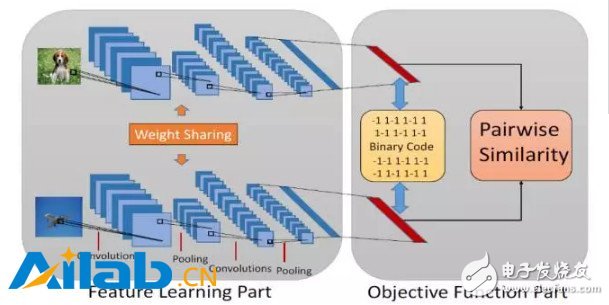

In this article by Li Wujun of Nanjing University, the author proposes a method of hash learning using a pairwise label. The usual image label may indicate which category the object in the image belongs to, or which category the scene depicted by the image belongs to, and the pairwise label here is defined based on a pair of images, indicating whether the pair of images are similar ( It is usually possible to define whether the pair of images belong to the same category to determine whether they are similar or not. Specifically, for the two images i, j in a database, sij=1 represents that the two images are similar, and sij=0 represents that the two images are not similar.

Specifically to this article, the author uses the network structure shown above, the input to the network is a pair of images, and the corresponding pairwise label. The network structure contains two sub-networks sharing weights (this structure is called Siamese Network), and each sub-network processes one of a pair of images. At the back end of the network, according to the binary code and pairwise label of the obtained samples, the author designed a loss function to guide the network training.

Specifically, ideally, the output of the network front end should be a binary vector consisting of only ±1. In this case, the inner product of the binary coded vectors of the two samples is in fact equivalent to the Hamming distance. . Based on this fact, the authors propose the following loss function, hoping to fit the pairwise label (logistic regression) with the similarity (inner product) between the sample binary encodings:

In practice, if you want the network front-end output to be a binary vector consisting of only ±1, you need to insert a quantization operation (such as the sign function) in the network. However, because the quantization function has a derivative of 0 or no guide on the domain, the gradient-based algorithm cannot be used when training the network. Therefore, the author proposes to relax the output of the network front end, and no longer requires the output to be binary. Instead, the network output is constrained by adding a regular term to the loss function:

Where U represents the "binary code" after relaxation, and the rest of the definitions are the same as J1.

At the time of training, the first item in J2 can be directly calculated from the label and Ui of the image pair, and the second item needs to be quantized to obtain the bi and then calculated. After training the network with the above loss function, when the query sample appears, only the image needs to be passed through the network, and the output of the last fully connected layer is quantized to obtain the binary encoding of the sample.

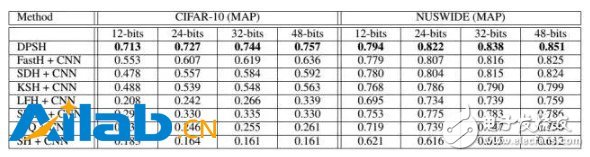

Some of the experimental results in this paper are as follows. The proposed method achieves the performance of state-of-the-art. Even in comparison with some non-depth hashing methods that use CNN features as input, there are significant advantages in performance. :

In general, the proposed method achieves significant performance improvement in image retrieval tasks by jointly learning image features and hash functions. However, because the pairwise label used in the paper only describes the pair of samples, there are only two similarities and similarities, which are relatively rough, which inevitably limits the application of the method. In the follow-up work, the author may consider using a more flexible form of supervisory information to extend the versatility of the method.

participants:

Hu Lanqing PhD student of the VIPL research group of the Institute of Computing Technology, Chinese Academy of Sciences

Yin Xiaoyu, Ph.D., VIPL Research Group, Institute of Computing, Chinese Academy of Sciences

Liu Wei, Ph.D., VIPL Research Group, Institute of Computing, Chinese Academy of Sciences

Liu Wei, Ph.D., VIPL Research Group, Institute of Computing, Chinese Academy of Sciences

Usually, there are certain restrictions on smart portable projectors in 2 aspects:

1. Size: Usually the size is the size of a mobile phone.

2. Battery life: It is required to have at least 1-2 hours or more of battery life without power connection. In addition, its general weight will not exceed 0.2Kg, and some do not even need fan cooling or ultra-small silent fan cooling. Can be carried with you (it can be put into a pocket, the screen can be projected to 40-50 inches or more, so sometimes we also call it a pico projector or a pocket projector.)

smart portable projector,smart portable projector for iphone,best smart portable projector 2020,cheap smart portable projector,mini smart portable projector wifi

Shenzhen Happybate Trading Co.,LTD , https://www.happybateprojector.com