Author: Akhilesh Kumar, Skylake-SP CPU Architect

The Intel® Xeon® Scalable processor platform represents the largest data center platform advancement of the decade. On the eve of its release, CPU architect Akhilesh Kumar shares his insights into Intel's architectural approach to meeting data center needs, and how that approach is realized through the new "mesh" on-chip interconnect of the Scalable processor family.

A key focus of data center architecture is increasing efficiency, both to achieve the best return on capital and to maximize data center output within limited space and power. Processors play a fundamental role in data center optimization, and the choice of processor architecture has a huge impact on scalability and efficiency. Achieving the desired balance between these factors requires vision, creativity, and innovation, and it cannot be accomplished overnight.

Intel's extensive product portfolio reflects its decades of experience in designing dedicated data center CPUs and platforms. From generation to generation, Intel continues to innovate core computing capabilities to improve processor performance. But our work does not stop there. It is equally important to improve the connectivity and scalability of all cores, fine-tune the memory hierarchy, and enhance I/O. These factors will ensure the scalability and efficiency of the computing, networking, and storage systems that make up the main building blocks of the data center.

Growing pains: the challenges of scale

Adding more cores and connecting them to create a multi-core data center processor may sound simple, but the interconnects between the CPU cores, memory hierarchy, and I/O subsystems are the critical pathways among these subsystems. These interconnects are like a well-designed highway system, with the right number of lanes and ramps at key locations to keep traffic flowing so that people and goods do not waste time on the road.

Increasing the number of processor cores, and raising the memory and I/O bandwidth of each processor to meet the needs of a wide range of data center workloads, poses challenges that must be addressed through creative architectural techniques. These challenges include:

· Increase the bandwidth between cores, the on-chip cache hierarchy, memory controllers, and I/O controllers. If the available interconnect bandwidth does not scale properly with the other resources on the processor, the interconnect becomes a bottleneck that limits system efficiency, much like a frustrating rush-hour traffic jam.

· Reduce latency when accessing data from the on-chip cache, main memory, or other cores. Access latency depends on the distance between on-chip entities, the path taken to send requests and responses, and the speed at which the interconnect operates. This is analogous to commute times in a sprawling city versus a compact one, the number of available routes, and the speed limit on the highway.

· Create energy-efficient ways to deliver data from the on-chip cache and memory to the cores and I/O. Because of the greater distances and higher bandwidth between components, the energy required to move data for the same task increases as more cores are added. In the transportation analogy, as a city grows and commuting distances increase, the time and energy wasted on commuting leave fewer resources available for productive work.

Intel is committed to innovative architectural solutions that stay ahead of these challenges, creating more powerful and efficient processors to meet the needs of existing and emerging workloads such as artificial intelligence and deep learning.

Building the data center processors of the future

Intel has drawn on its experience and innovative technology to develop a new architecture for the upcoming Intel® Xeon® Scalable processors, providing a scalable foundation for modern data centers. This new architecture provides a new way to interconnect on-chip components to increase the efficiency and scalability of multi-core processors.

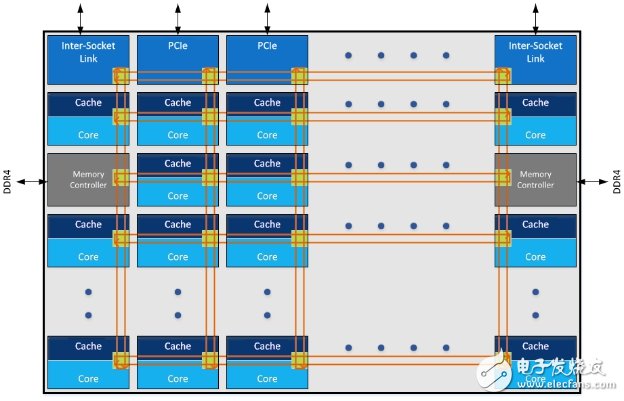

The Intel® Xeon® Scalable processor features an innovative "mesh" on-chip interconnect topology that provides low latency and high bandwidth among cores, memory, and I/O controllers. Figure 1 shows a conceptual representation of the mesh architecture. The cores, on-chip cache banks, memory controllers, and I/O controllers are organized in rows and columns, with wires and switches connecting them at each intersection to allow turns. By providing a more direct path than the previous ring architecture, and more pathways to minimize bottlenecks, the mesh can operate at a lower frequency and voltage while still delivering very high bandwidth and low latency. This results in improved performance and greater energy efficiency, like a well-designed highway system that lets traffic flow at the optimal speed without congestion.

Figure 1: Conceptual representation of the mesh architecture
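The point about a mesh offering more direct paths than a ring can be illustrated with a small hop-count model. The following is a minimal sketch, not a description of the actual Skylake-SP interconnect: it assumes a single bidirectional ring, a plain rectangular mesh with shortest-path routing, and a hypothetical count of 36 stops. The function names `ring_avg_hops` and `mesh_avg_hops` are made up for this illustration.

```python
from itertools import product


def ring_avg_hops(n):
    """Average shortest-path hop count between distinct stops
    on a bidirectional ring with n stops (illustrative model)."""
    total = 0
    pairs = 0
    for a in range(n):
        for b in range(n):
            if a != b:
                d = abs(a - b)
                # Traffic may travel either way around the ring.
                total += min(d, n - d)
                pairs += 1
    return total / pairs


def mesh_avg_hops(rows, cols):
    """Average hop count between distinct nodes of a rows x cols mesh,
    assuming shortest-path routing (Manhattan distance)."""
    nodes = list(product(range(rows), range(cols)))
    total = 0
    pairs = 0
    for (r1, c1) in nodes:
        for (r2, c2) in nodes:
            if (r1, c1) != (r2, c2):
                total += abs(r1 - r2) + abs(c1 - c2)
                pairs += 1
    return total / pairs


if __name__ == "__main__":
    # Hypothetical sizes chosen only for comparison: 36 stops either way.
    print("ring, 36 stops:", round(ring_avg_hops(36), 2))
    print("mesh, 6 x 6   :", round(mesh_avg_hops(6, 6), 2))
```

Under these assumptions, the 36-stop ring averages about 9.26 hops between stops, while the 6 x 6 mesh averages 4.0. Hop count is only a rough proxy for latency, but it shows why distance grows more slowly on a mesh as stops are added.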

In addition to improving the connectivity and topology of the on-chip interconnect, the Intel® Xeon® Scalable processor also features a modular architecture with scalable resources for accessing on-chip cache, memory, I/O, and remote CPUs. These resources are distributed throughout the chip, which minimizes "hot spots" and other subsystem resource constraints. The modular and distributed nature of the architecture allows available resources to scale as the number of processor cores increases.

This scalable, low-latency on-chip interconnect framework is also important for the shared last-level cache architecture. Large shared caches are invaluable for complex multi-threaded server applications such as databases, complex physical simulations, high-throughput networking applications, and hosting multiple virtual machines. Because the differences in latency when accessing different cache banks are negligible, software can treat the distributed cache banks as one large, unified last-level cache. As a result, application developers do not have to worry about variable latency when accessing different cache banks, and they do not need to optimize or recompile their code to get a significant boost in application performance. The same benefit of uniform low-latency access carries over to memory and I/O access: multi-threaded or distributed applications, in which execution on different cores interacts with data from I/O devices, do not need to carefully map cooperating threads onto cores within a socket to get the best performance. Such applications can therefore take advantage of a large number of cores and still achieve good scalability.

Summary

The new architecture with its mesh on-chip interconnect provides a very powerful framework for integrating the various components of the Intel® Xeon® Scalable processor: the cores, cache, memory, and I/O subsystems. This innovative architecture delivers performance and efficiency across the widest range of usage scenarios, and provides the foundation for continued improvements by Intel and its unparalleled global ecosystem, delivering solutions with the computing power and efficiency that data center customers expect.
